Introduction

Finding useful information in PDF documents can be tough and time-consuming. Traditional methods of searching through PDFs manually are becoming outdated. Thankfully, Artificial Intelligence (AI) tools like Gemini and Vertex AI are making it easier to get answers from PDFs.

In this blog, we’ll explore how Vertex AI Search and Gemini make it easier to find the information you need in PDFs. You ask a question in natural language, Vertex AI Search retrieves the relevant chunks from the documents, and Gemini generates an answer from those chunks. Let’s dive into PDF Question Answering (QA) with Vertex AI and Gemini.

Prerequisites

- Google Cloud Project

- Input file (PDF) uploaded to a Google Cloud Storage bucket

Steps:

Step 1:

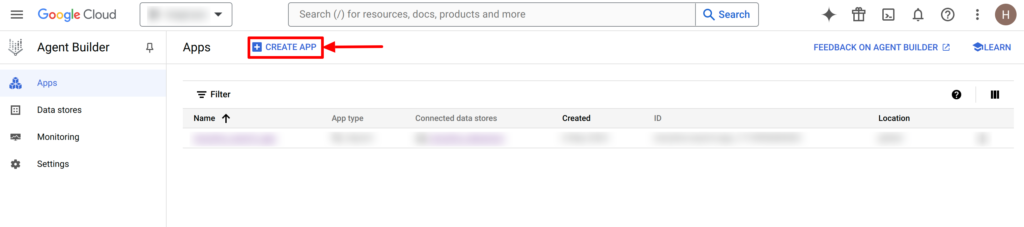

The first step is to create a Vertex AI Search App.

- We need to enable the “Discovery Engine API” to create a search app.

- Once the API is enabled, we are redirected to the Agent Builder page. Now click on “CREATE APP”.

- Then select App Type as “Search”.

- After selecting the App Type, configure the search app. Choose “Generic” for content type.

- We can enable the “Enterprise edition features” and “Advanced LLM features” as required.

- Provide a name to your search app.

- Provide the company name and select the location for the search app from the dropdown.

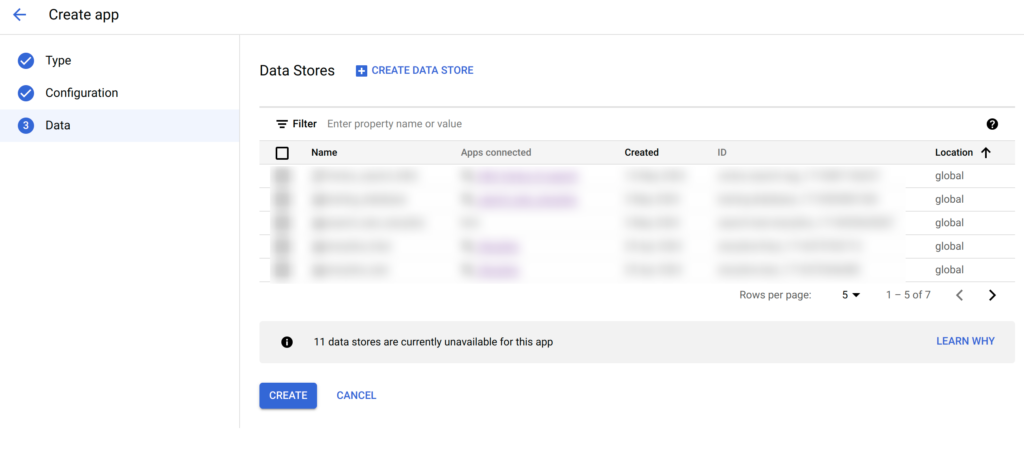

- After creating the search app, we need to connect it to a data store.

- We can use a previously created data store or create a new one. To create a new data store, click on “CREATE DATA STORE”.

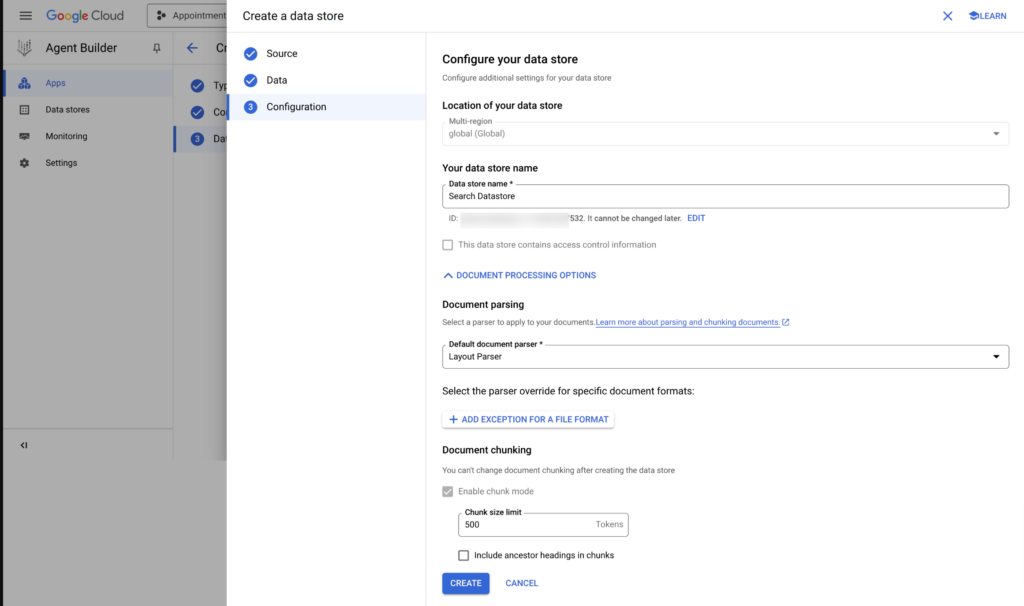

- While creating the data store, select the Cloud Storage bucket path or file on which you want to perform question answering.

- Give your data store a name and choose the document parser that matches your file format.

- Here we want chunks from our file to perform question answering, so we have chosen “Layout Parser” for this search app.

- Choose the chunk size limit, which must be less than or equal to 500.

- Once you click the “CREATE” button, the data store will be created with its own unique ID.

- Now select the created data store to connect it to the search app. We can connect more than one data store to a search app.
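
The console steps above can also be expressed as a request body for the Discovery Engine REST API. The following is only a sketch of that body: the field names (`documentProcessingConfig`, `layoutBasedChunkingConfig`, `layoutParsingConfig`, and so on) reflect our understanding of the API and should be checked against the current reference, and the helper name `build_datastore_body` is hypothetical.

```python
def build_datastore_body(display_name, chunk_size=500, include_ancestor_headings=True):
    """Build a request body for a chunk-enabled data store (illustrative only)."""
    # The console limits the chunk size to 500, so reject larger values early.
    if not 1 <= chunk_size <= 500:
        raise ValueError("chunk_size must be between 1 and 500")
    return {
        "displayName": display_name,
        "industryVertical": "GENERIC",               # "Generic" content type
        "solutionTypes": ["SOLUTION_TYPE_SEARCH"],   # App type "Search"
        "contentConfig": "CONTENT_REQUIRED",         # unstructured documents such as PDFs
        "documentProcessingConfig": {
            # Layout-aware chunking, as chosen in the console walkthrough.
            "chunkingConfig": {
                "layoutBasedChunkingConfig": {
                    "chunkSize": chunk_size,
                    "includeAncestorHeadings": include_ancestor_headings,
                }
            },
            # "Layout Parser" as the default document parser.
            "defaultParsingConfig": {"layoutParsingConfig": {}},
        },
    }
```

The function only builds a dictionary; sending it to the API is left out on purpose, since the console flow described above achieves the same result.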

Step 2:

- Install the Google Cloud SDK if you haven’t already. You can download it from the Google Cloud SDK install page: https://cloud.google.com/sdk/docs/install-sdk#deb

- After installing the SDK, create your credentials file by running the following command in your terminal:

    gcloud auth application-default login

- Set up the project using the following commands in your terminal.

[1] Initialize the gcloud CLI:

    gcloud init

[2] Now add a quota project to the Application Default Credentials (ADC), replacing PROJECT_ID with your own project ID:

    gcloud auth application-default set-quota-project PROJECT_ID

Follow the prompts to authenticate and authorize the application. For more detail, see the Google Cloud guide “Set up Application Default Credentials”.
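
Before moving on, it can help to confirm that the credentials file actually exists. The sketch below checks the locations where the gcloud CLI normally writes it; it assumes a default gcloud configuration (a custom `CLOUDSDK_CONFIG` directory is not handled), and the helper name `find_adc_file` is hypothetical.

```python
import os
from pathlib import Path

ADC_FILENAME = "application_default_credentials.json"


def find_adc_file(env=None, home=None):
    """Return the path of the ADC file if one can be found, else None."""
    env = os.environ if env is None else env

    # 1. An explicit credentials file takes precedence.
    explicit = env.get("GOOGLE_APPLICATION_CREDENTIALS")
    if explicit and Path(explicit).is_file():
        return Path(explicit)

    # 2. Otherwise look in the default gcloud configuration directory.
    if os.name == "nt" and env.get("APPDATA"):
        candidate = Path(env["APPDATA"]) / "gcloud" / ADC_FILENAME
    else:
        base = Path(home) if home is not None else Path.home()
        candidate = base / ".config" / "gcloud" / ADC_FILENAME
    return candidate if candidate.is_file() else None
```

If `find_adc_file()` returns `None`, rerunning `gcloud auth application-default login` is the likely fix.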

Step 3:

Now install the required libraries for our project with the following commands:

    pip install requests
    pip install google-auth
    pip install google-cloud-aiplatform

Next, we will load the required libraries and initialize the project details.

    # Load the required libraries
    import requests
    from google.auth import default
    from google.auth.transport.requests import Request
    import vertexai
    from vertexai.generative_models import GenerativeModel
    import vertexai.preview.generative_models as generative_models

    # Project configuration
    project_id = "YOUR PROJECT ID"
    datastore_id = "YOUR DATASTORE ID"

Make sure to replace the “YOUR PROJECT ID” and “YOUR DATASTORE ID” placeholders with your own project ID and the data store ID generated in Step 1.
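
As an alternative to editing the script, the two values could be read from environment variables, which also catches the case where a placeholder was left in by mistake. This is a minimal sketch, not part of the original setup: the variable names `VERTEX_PROJECT_ID` and `VERTEX_DATASTORE_ID` and the helper `load_setting` are hypothetical.

```python
import os

# Placeholder strings from the configuration snippet that must not reach the API.
PLACEHOLDERS = {"YOUR PROJECT ID", "YOUR DATASTORE ID"}


def load_setting(name, fallback, env=None):
    """Read a setting from the environment, falling back to a hard-coded value."""
    env = os.environ if env is None else env
    value = env.get(name, fallback).strip()
    if not value or value in PLACEHOLDERS:
        raise ValueError(f"{name} is not set; export it or edit the script")
    return value
```

Usage would look like `project_id = load_setting("VERTEX_PROJECT_ID", "YOUR PROJECT ID")`, which fails fast with a clear message instead of sending a placeholder to the API.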

Step 4:

Here, we are going to run a search against the Vertex AI Search app and get the chunks most relevant to the user’s query.

For this blog, we’ve chosen a research paper in PDF format for our Question and Answer (QnA) tasks. The paper, titled “DEEP LEARNING APPLICATIONS AND CHALLENGES IN BIG DATA ANALYTICS,” can be accessed through the link below.

https://journalofbigdata.springeropen.com/articles/10.1186/s40537-014-0007-7

First, we need an access token for our Application Default Credentials. To get this token, we will use the function below.

    # Function to get the access token
    def get_access_token():
        creds, project = default()
        creds.refresh(Request())
        return creds.token
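
Access tokens expire (typically after about an hour), so a long-running Q&A session may need a fresh one. The sketch below wraps any token-fetching function, such as `get_access_token`, in a small cache that refetches after a fixed lifetime. The class name `TokenCache` and the 3000-second default are assumptions chosen for illustration, not values taken from the Google libraries.

```python
import time


class TokenCache:
    """Cache a token and refetch it once an assumed lifetime has passed."""

    def __init__(self, fetch_token, lifetime_seconds=3000, clock=time.monotonic):
        self._fetch_token = fetch_token      # e.g. get_access_token
        self._lifetime = lifetime_seconds    # assumed to be below the real expiry
        self._clock = clock
        self._token = None
        self._expires_at = 0.0

    def get(self):
        now = self._clock()
        # Fetch on first use, or when the cached token is considered stale.
        if self._token is None or now >= self._expires_at:
            self._token = self._fetch_token()
            self._expires_at = now + self._lifetime
        return self._token
```

With this in place, `tokens = TokenCache(get_access_token)` can be created once and `tokens.get()` called before each search request.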

Here is the function to get the search results from the Vertex AI search app.

    def search_datastore(project_id, datastore_id, query, access_token):
        # Search endpoint of the data store's default serving config
        url = f"https://discoveryengine.googleapis.com/v1alpha/projects/{project_id}/locations/global/collections/default_collection/dataStores/{datastore_id}/servingConfigs/default_search:search"
        headers = {
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
            "X-Goog-User-Project": project_id
        }
        # Ask for chunks (not whole documents), plus one neighbouring chunk on each side
        payload = {
            "query": query,
            "pageSize": 2,
            "contentSearchSpec": {
                "searchResultMode": "CHUNKS",
                "chunkSpec": {
                    "numPreviousChunks": 1,
                    "numNextChunks": 1
                }
            }
        }
        response = requests.post(url, headers=headers, json=payload)
        if response.status_code == 200:
            print("Vertex Search Response>>>>>>>>>>>>>>>", response.json())
            return response.json()
        else:
            print(f"Error: {response.status_code}")
            return None

We are sending the JSON-formatted search response containing relevant chunks to Gemini for generating answers.

Here is the sample search response for the query.

Here, we have returned the two best-matching search results for the query. We can change the number of results by updating the value of pageSize in the request payload. In addition, numPreviousChunks and numNextChunks control how many chunks before and after each matched chunk are returned with it.
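
To make the shape of such a response more concrete, the sketch below pulls the text of each matched chunk, together with its neighbouring chunks, out of the JSON. The field names (`results`, `chunk`, `content`, `chunkMetadata`, `previousChunks`, `nextChunks`) reflect our understanding of the chunk-mode response and should be verified against a real response; the order of the neighbouring chunks is also an assumption, and the helper name `extract_chunk_texts` is hypothetical.

```python
def extract_chunk_texts(search_response):
    """Return one text block per search result: previous + matched + next chunks."""
    texts = []
    for result in (search_response or {}).get("results", []):
        chunk = result.get("chunk", {})
        metadata = chunk.get("chunkMetadata", {})
        # Neighbouring chunks are assumed to be listed in reading order.
        previous = [c.get("content", "") for c in metadata.get("previousChunks", [])]
        following = [c.get("content", "") for c in metadata.get("nextChunks", [])]
        parts = previous + [chunk.get("content", "")] + following
        texts.append("\n".join(part for part in parts if part))
    return texts
```

Passing only these text blocks to the model, rather than the full JSON, is one possible way to keep the prompt shorter; the walkthrough here sends the whole response.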

Since Vertex AI Search provides chunks of responses rather than exact answers to queries, in the next step, we will use the Gemini API to extract precise answers to the given questions.

Step 5:

Now, we will perform a Gemini API request to get the answer to the user query from the received matching chunks.

We pass a custom prompt to the Gemini API request to make the result more relevant and accurate. The prompt is built from the user’s query and the Vertex AI Search response.

    # Prepare the prompt for the Gemini request
    def get_prompt(user_query, context):
        prompt = f"""You are a search service who helps the users, you need to provide relevant answer to the users. You have been provided with a question and related Vertex Search Service response. You have to analyze the whole Vertex Search Service response and find relevant answer according to the question.

    Vertex Search Service response: {context}

    Question: {user_query}

    If you are not able to understand the question, then ask the User to rephrase it.

    Answer:"""
        return prompt

Next, we will pass the prompt to Gemini and return the answer.

    # Generate a response using a Gemini API request
    def get_response_from_gemini(prompt, project_id):
        generation_config = {
            "max_output_tokens": 2048,
            "temperature": 0.4,
            "top_p": 0.4,
            "top_k": 32,
        }
        safety_settings = {
            generative_models.HarmCategory.HARM_CATEGORY_HATE_SPEECH: generative_models.HarmBlockThreshold.BLOCK_MEDIUM_AND_ABOVE,
            generative_models.HarmCategory.HARM_CATEGORY_DANGEROUS_CONTENT: generative_models.HarmBlockThreshold.BLOCK_MEDIUM_AND_ABOVE,
            generative_models.HarmCategory.HARM_CATEGORY_SEXUALLY_EXPLICIT: generative_models.HarmBlockThreshold.BLOCK_MEDIUM_AND_ABOVE,
            generative_models.HarmCategory.HARM_CATEGORY_HARASSMENT: generative_models.HarmBlockThreshold.BLOCK_MEDIUM_AND_ABOVE,
        }
        vertexai.init(project=project_id, location="YOUR PROJECT LOCATION")
        model = GenerativeModel("gemini-1.0-pro-vision-001")
        responses = model.generate_content(
            [prompt],
            generation_config=generation_config,
            stream=True,
            safety_settings=safety_settings
        )
        # Concatenate the streamed pieces into a single answer
        answer = ''
        for response in responses:
            answer += response.text
        return answer
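
When streaming, an individual piece of the response may carry no text, for example if it was stopped by a safety filter. As far as we know, reading `.text` on such a piece raises a `ValueError` in the Vertex AI SDK, though this should be treated as an assumption. The sketch below shows one way to collect the stream while skipping such pieces; `collect_stream_text` is a hypothetical helper that works with any objects exposing a `.text` attribute.

```python
def collect_stream_text(responses):
    """Join the text of streamed responses, skipping pieces that have no text.

    Returns a tuple of (joined_text, number_of_skipped_pieces).
    """
    pieces = []
    skipped = 0
    for response in responses:
        try:
            pieces.append(response.text)
        except (ValueError, AttributeError):
            # Assumed behaviour: a piece without text raises when .text is read.
            skipped += 1
    return "".join(pieces), skipped
```

Inside `get_response_from_gemini`, the final loop could be replaced with `answer, skipped = collect_stream_text(responses)`, so a partially blocked stream still yields the text that did arrive.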

Step 6:

We are now ready to conduct Question and Answer (Q&A) sessions using the PDF data. To ask a question, include the following lines of code in your script:

    # Get an access token, then take the user input and generate the output
    access_token = get_access_token()

    while True:
        user_query = input("Enter your search query here: ")
        context = search_datastore(project_id, datastore_id, user_query, access_token)
        prompt = get_prompt(user_query, context)
        try:
            response = get_response_from_gemini(prompt, project_id)
        except Exception:
            response = "Gemini request failed."
        print(f"Answer: {response}\n\n")
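
The loop above runs until the script is interrupted and still calls Gemini when the search itself failed. A slightly more defensive variant is sketched below. The search, prompt-building and generation steps are passed in as functions so the loop can be tried without any cloud access; the name `run_qa_loop` and the exit words `quit` and `exit` are hypothetical choices.

```python
def run_qa_loop(search, build_prompt, generate, read=input, write=print):
    """Ask for queries until the user types 'quit', 'exit' or an empty line."""
    while True:
        query = read("Enter your search query here (or 'quit'): ").strip()
        if query.lower() in {"", "quit", "exit"}:
            break
        context = search(query)
        if context is None:
            # The search step signals failure by returning None.
            write("Search failed; please try again.")
            continue
        try:
            answer = generate(build_prompt(query, context))
        except Exception as exc:
            answer = f"Gemini request failed: {exc}"
        write(f"Answer: {answer}\n")
```

With the functions from the earlier steps, this could be called as `run_qa_loop(lambda q: search_datastore(project_id, datastore_id, q, get_access_token()), get_prompt, lambda p: get_response_from_gemini(p, project_id))`.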

Test Results:

Here are some test examples demonstrating how our Q&A system manages different questions and produces responses.

Q1: What are the 2 main focuses of the paper?

Q2: What is HOG?

Q3: Provide me the 4 Vs of Big Data characteristics.

Q4: Who introduced mSDAs?

Q5: What is the main concept in deep learning algorithms?

Conclusion

In this blog, we looked at how Vertex AI Search and Gemini work together to build a question-answering system over PDF data.

Vertex AI Search retrieves the chunks most relevant to a query, which keeps retrieval fast and focused. Gemini then generates an answer from those chunks, so each response is not only quick but also contextually appropriate to the document.
